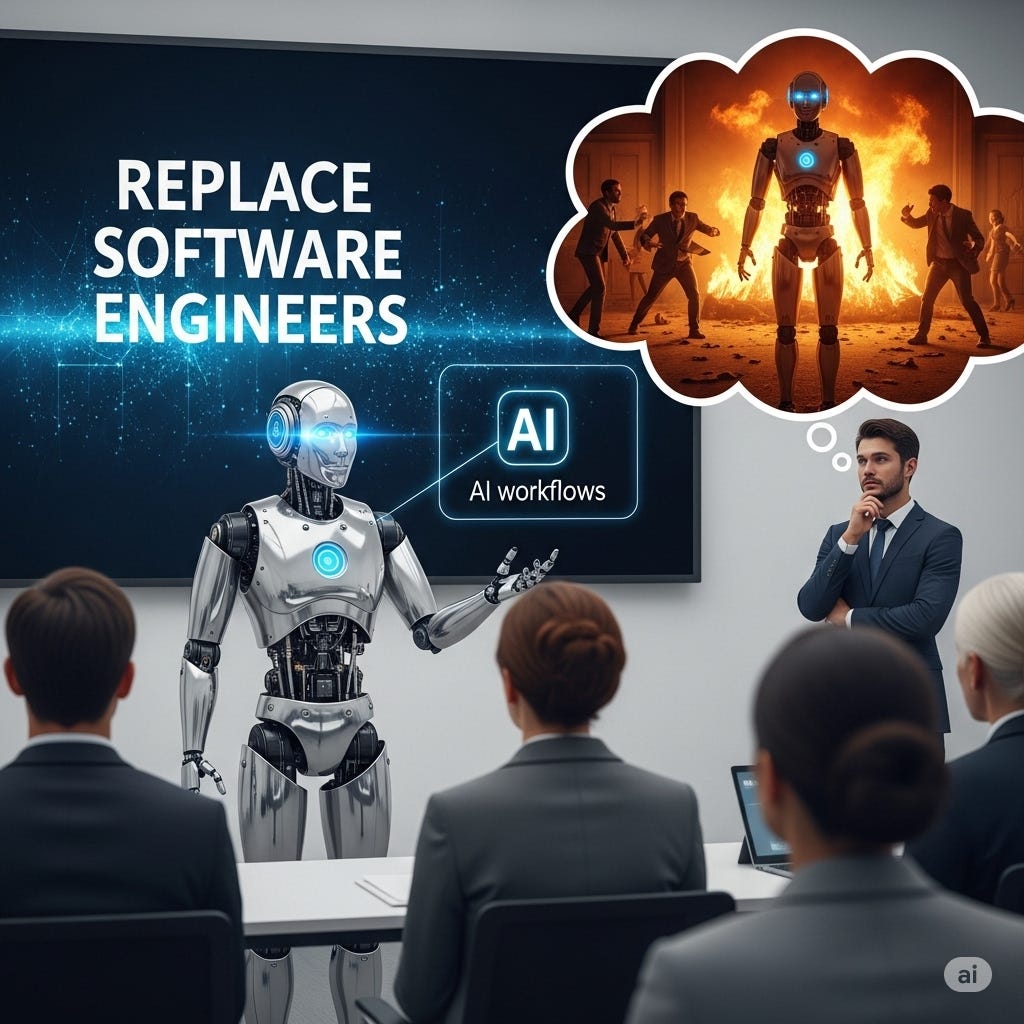

Warning to CEOs: The AI You Are Being Told Can Replace Engineers, Designers, and Researchers Is More Likely to Bankrupt You Than You Think

The generative AI hype train that is raging across the corporate world claims that many white-collar jobs will soon be replaced by AI. Let's explore some of the hidden costs they don't tell you about!

There is a narrative raging across the business world right now that I am sure everyone is aware of at this point:

“LLMs and generative AI have the power to automate the work your employees are doing!”

While this narrative is not wrong on its face (after all, there are many domains of work where this type of automation is likely already happening), a lucrative fantasy is being sold alongside it, which claims that ‘design’, ‘problem solving’, and ‘research’ oriented work will very soon be included in the list of domains that can be significantly automated. So soon, in fact, that you probably need fewer people in those domains working at your company already!

For those who have been pitched the lucrative fantasy described or are themselves pitching it to business leaders with some amount of honest belief, I bring you this warning:

The perceived ROI of a generative tool for a non-trivial problem is inversely related to the reality of that tool’s ROI.

The belief that LLMs can generate functional project-coherent code, a perfect design, or a new discovery from only some thoroughly developed prompts and coordinating infrastructure is ludicrously easy to pitch compared to the effort required to successfully build such a system.

Generative automation flows are dangerously deceptive in this way. Building the prototype of a generative tool is extremely easy: there is a plethora of generative tools already built to develop other generative tools. To a non-technical person that might sound like it directly contradicts the point I am making, but it in fact illustrates perfectly the type of deception I am referring to.

In a thirty-minute or hour-long demo, an auto-generated automation prototype can be shown solving many novel yet complicated business-specific problems. In fact, it will appear (and be pitched to you) as a straightforward-to-implement, extremely cheap-to-run tool that can be tailored for and scaled to your business’s needs.

Wow! Sounds great!

But what these demos fail to convey (or outright hide) is just how difficult it can be to get these kinds of systems to overcome the real-world problems presented by even just 1% of your expected input space. Generating an incorrect output during any single step in the proposed tool often leads not to small failures, but to catastrophic ones. Worse still, each time you tweak a prompt or change the series of steps the tool performs, the exact section of the input space that makes up that 1% can change drastically.

Consistency and accuracy are critical to these kinds of tools being at all useful in a business environment. If the tool is not proven to correctly handle even that 1% of the input space, it doesn’t just go from 100% effectiveness to 99% effectiveness; it usually goes from 100% effectiveness to 0% effectiveness.

Generative tools built for non-trivial tasks have an often unconsidered, yet massive, hurdle to overcome:

If you cannot trust that the system autonomously generates a valid output 99.999% of the time, then you cannot know with certainty that ANY of its autonomous outputs are valid without extensive output validation and/or a step-by-step analysis of how the input traversed the system to produce that output.

Any mistake introduced along the generative flow can end up being propagated through several other generative layers, which essentially corrupts the output produced and in many cases makes the failure extremely difficult to diagnose after the fact. Even the smallest and most trivial mistakes can effectively eliminate any chance the system had of arriving at a result that can be proven to be within the desired output space.
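To put a rough number on how mistakes compound across generative layers, here is a minimal sketch. It assumes every step succeeds independently with the same accuracy, which is my simplification and not a measurement of any real system; the function name is hypothetical.

```python
def end_to_end_success(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step in a chain is correct.

    Assumes steps fail independently with identical accuracy, which is
    an illustrative simplification rather than a property of real tools.
    """
    return per_step_accuracy ** steps


# A step that is right 99% of the time looks excellent in isolation.
for steps in (1, 5, 10, 20):
    print(steps, round(end_to_end_success(0.99, steps), 3))
```

Under these assumptions, a ten-step flow built from 99%-accurate steps is fully correct only about 90% of the time, and a twenty-step flow only about 82% of the time.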

In order for any of those flashy demos you may have watched (or pitched yourself) to become a mature tool that your business can actually use, the business will need to pay one or more people to:

1. Develop prompts which can be proven to accurately and consistently produce the entire desired output possibility space for the entire expected input possibility space.

2. Build infrastructure that allows the end-to-end expected input possibility space to be ingested and to traverse two or more systems, using the prompts described in #1, to accurately and consistently produce the end-to-end desired output possibility space.

3. Create step-by-step output space validation infrastructure which can be trusted to identify, with a business-worthy amount of certainty, whether anything produced by the system is actually in that desired output space.

4. Ensure that the system will remain consistent and accurate for your business needs over some meaningful time horizon.

5. Support updates, debugging, scalability, and security. (There are so so so many overlapping security domains one must consider for generative tools!)

6. Prove that the initial infrastructure costs + overall token costs + maintenance costs are less than the cost to just have a salaried human do the work from input to output.
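The last item on that list is plain arithmetic, and it is worth writing down before signing anything. The sketch below is only an illustration: the class, the function names, the three-year amortization window, and every number in it are made-up placeholders, not real pricing or benchmarks.

```python
from dataclasses import dataclass


@dataclass
class ToolCosts:
    build: float                 # one-time infrastructure and prompt development
    tokens_per_task: float       # average inference spend per task
    maintenance_per_year: float  # updates, debugging, security, validation upkeep


def annual_tool_cost(costs: ToolCosts, tasks_per_year: int, amortize_years: int) -> float:
    """Yearly cost of the tool, spreading the build cost over amortize_years."""
    return (
        costs.build / amortize_years
        + costs.maintenance_per_year
        + costs.tokens_per_task * tasks_per_year
    )


def tool_is_cheaper(costs: ToolCosts, tasks_per_year: int,
                    human_cost_per_task: float, amortize_years: int = 3) -> bool:
    """True only if the tool undercuts a salaried human doing the same tasks."""
    human_cost = human_cost_per_task * tasks_per_year
    return annual_tool_cost(costs, tasks_per_year, amortize_years) < human_cost


# Placeholder numbers purely for illustration.
costs = ToolCosts(build=300_000, tokens_per_task=2.0, maintenance_per_year=150_000)
print(tool_is_cheaper(costs, tasks_per_year=1_000, human_cost_per_task=200))
print(tool_is_cheaper(costs, tasks_per_year=10_000, human_cost_per_task=200))
```

With these placeholder figures the tool loses at 1,000 tasks a year and wins at 10,000, which is the point: the token price per task is rarely what decides the outcome.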

It turns out that transforming any amount of English into a desired piece of code, design, or discovery is a non-trivial problem past a relatively small threshold of task complexity. No matter how intelligent the system processing your English is, the consistency your business requires when mapping the actual input space to the desired output space is not confirmed by a piece of code, design, or discovery appearing to be correct.

It is confirmed when it has been proven to be correct!

Let’s focus on the problem of generating software. You can describe all the code you want with as much depth as you can possibly express, but until that description is implemented in a Turing complete language and has an adequately expansive expected input space validated against an adequately expansive desired output space, that business-required consistency has not yet been proven.

This is because the number of known and unknown ambiguities in your English description of the desired software to be generated increases exponentially as you linearly increase the ‘content’ of that description.

For example, you should never trust the integrity of a piece of software based solely on tests an LLM wrote for that software. No matter how complicated or precise you believe your test generation prompts or generative infrastructure to be, if you do not have adequate comprehension of and visibility into the workings of that software (and the tests themselves!), you cannot know that the system will not fail catastrophically on even some of the most trivial real-world inputs.

If you don’t know why that is the case, that’s OK. But you need to understand that it is a strong indicator you do not have the skills to properly anticipate the kind of non-trivial problems that will need to be overcome if you intend to deploy these kinds of systems in your business.
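Here is a deliberately tiny, hand-written illustration of why passing tests prove so little on their own. It is a hypothetical example I made up, not the output of any actual LLM: the code and its tests share the same blind spot, so the suite is green while the bug is still there.

```python
def is_leap_year(year: int) -> bool:
    # Buggy on purpose: ignores the century rule, so 1900 is treated
    # as a leap year even though it was not one.
    return year % 4 == 0


def generated_tests_pass() -> bool:
    # Tests written from the same incomplete understanding as the code.
    # None of them probes a century year that is not divisible by 400.
    return (
        is_leap_year(2024)
        and not is_leap_year(2023)
        and is_leap_year(2000)
    )


print(generated_tests_pass())  # True: every test passes
print(is_leap_year(1900))      # True: wrong, and no test noticed
```

Nothing in the passing suite tells you the century rule was ever considered. Only someone who understands both the problem and the tests can spot what is missing.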

The hardest coding problems aren't hard because engineers don’t know what they need to do; the hardest coding problems are hard because at no point over the course of an input’s traversal of the system can any constraint contradict another constraint.

This 'constraint of constraints by other constraints' is what creates non-trivial system complexity, whether that system is a software solution, a design implementation, or a research focus. This explosion of complexity occurs when you transition from a problem statement like:

Build me a nice looking website that allows users to log in and read their personal data from a database for this small business with a couple thousand customers.

. . . to this kind of problem statement:

Build a website according to my business requirements, uptime needs, and performance expectations. It needs to do X, Y, Z, and Q seamlessly and without any security flaws. You need to be able to deploy and scale it to millions of users on a plethora of devices. Also, it needs to align with the business’s evolving needs for the next 4+ years.

A freshly graduated junior engineer, one of the countless generative website tools, or just an LLM could likely be given ‘okay-ish’ documentation and create an adequate solution for the first problem statement from 0 to 100. But for the second problem statement, it doesn't matter how detailed and exhaustive the documentation you provide to a generative tool (or junior engineer) is.

If the documentation is not itself written in a formal, executable language (which plain English is not), there will always be aspects of the code that you cannot anticipate and explicitly address within the documentation, because 'constraints of constraints by constraints' exponentially increase the complexity of a problem even for a seemingly small input and/or output space.
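A back-of-the-envelope way to see that growth is to count how many combinations of constraints could, in the worst case, conflict with one another. The function names below are hypothetical, and the counts are upper bounds on what might need checking, not a claim that every combination interacts in a real system.

```python
from math import comb


def pairwise_interactions(n_constraints: int) -> int:
    """Number of distinct pairs of constraints that could conflict."""
    return comb(n_constraints, 2)


def possible_interacting_groups(n_constraints: int) -> int:
    """Number of groups of two or more constraints (worst-case upper bound)."""
    return 2 ** n_constraints - n_constraints - 1


for n in (5, 10, 20):
    print(n, pairwise_interactions(n), possible_interacting_groups(n))
```

Going from 10 constraints to 20 roughly quadruples the pairs (45 to 190) but takes the possible groups from about a thousand to over a million, which is why documentation written for the small case stops covering the large one.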

Therefore, when trying to replace software engineers with generative coding tools, you had better know with a massive amount of certainty that your generative tool will be able to solve even tiny problems using only the current code base and the documentation that ambiguously (or incorrectly) covers said tiny problem.

Oh, and I almost forgot: it also needs to be able to fix whatever bugs it introduces after it ‘fixes’ that tiny problem.

Generative tools can appear to be a golden goose that will reduce your labor costs immensely, but they can also become a bottomless pit of investment, since the problems these systems need to overcome can seem much simpler at the outset than they turn out to be.

Which, might I point out, has been a staple of coding, design, and research work for way longer than AI has been around, and is why you usually need a bunch of smart, tenacious humans to attend to these tasks!

There are many places where such tools are appropriate and I will admit that the engineering problems discussed aren’t impossible in many cases! But any business leader who thinks they will be able to replace a portion of their human labor with these tools needs to be aware of the amount of human labor required to even get to a system that can probably do the work. Not to mention the work needed to prove any output can even be trusted.

In conclusion:

Building, maintaining, and sustaining the kinds of generative tools that the ‘problem-solving’, ‘design’, and ‘research’ work domains require won’t be as easy as it can seem. The fantasy of your company’s software engineers, researchers, and countless other ‘decision making’ roles being easily replaced by generative tools is, in my view, a recipe for a very expensive lesson in just how ‘complex’ complex problems can be.